The Internet has revolutionized the computer and communications world like nothing before. The invention of the telegraph, telephone, radio, and computer set the stage for this unprecedented integration of capabilities. The Internet is at once a world-wide broadcasting capability, a mechanism for information dissemination, and a medium for collaboration and interaction between individuals and their computers without regard for geographic location. The Internet represents one of the most successful examples of the benefits of sustained investment and commitment to research and development of information infrastructure. Beginning with the early research in packet switching, the government, industry and academia have been partners in evolving and deploying this exciting new technology. Today, terms like “bleiner@computer.org” and “http://www.acm.org” trip lightly off the tongue of the random person on the street. 1

This is intended to be a brief, necessarily cursory and incomplete history. Much material currently exists about the Internet, covering history, technology, and usage. A trip to almost any bookstore will find shelves of material written about the Internet. 2

1 Perhaps this is an exaggeration based on the lead author’s residence in Silicon Valley.

2 On a recent trip to a Tokyo bookstore, one of the authors counted 14 English language magazines devoted to the Internet.

The

Internet protocol suite (TCP/IP) was developed by

Robert E. Kahn and

Vint Cerf in the 1970s and became the standard networking protocol on the ARPANET, incorporating concepts from the French CYCLADES project directed by

Louis Pouzin. In the early 1980s the NSF funded the establishment of national supercomputing centers at several universities, and provided interconnectivity in 1986 with the

NSFNET project, which also created network access to the

supercomputer sites in the United States from research and education organizations. Commercial

Internet service providers (ISPs) began to emerge in the very late 1980s. The ARPANET was decommissioned in 1990. Limited private connections to parts of the Internet by officially commercial entities emerged in several American cities by late 1989 and 1990,

[5] and the NSFNET was decommissioned in 1995, removing the last restrictions on the use of the Internet to carry commercial traffic.

In the 1980s, research at CERN in Switzerland by British computer scientist

Tim Berners-Lee resulted in the

World Wide Web, linking hypertext documents into an information system, accessible from any node on the network.

[6] Since the mid-1990s, the Internet has had a revolutionary impact on culture, commerce, and technology, including the rise of near-instant communication by

electronic mail,

instant messaging,

voice over Internet Protocol (VoIP) telephone calls,

two-way interactive video calls, and the

World Wide Web with its

discussion forums,

blogs,

social networking, and

online shopping sites. The research and education community continues to develop and use advanced networks such as

JANET in the United Kingdom and

Internet2 in the United States. Increasing amounts of data are transmitted at higher and higher speeds over fiber optic networks operating at 1 Gbit/s, 10 Gbit/s, or more. The Internet's takeover of the global communication landscape was almost instant in historical terms: it only communicated 1% of the information flowing through two-way

telecommunications networks in the year 1993, already 51% by 2000, and more than 97% of the telecommunicated information by 2007.

[7] Today the Internet continues to grow, driven by ever greater amounts of online information, commerce, entertainment, and

social networking. However, the future of the global internet may be shaped by regional differences in the world.

[8]

Internet history timeline

Early research and development:

Merging the networks and creating the Internet:

Commercialization, privatization, broader access leads to the modern Internet:

Examples of Internet services:

- 1989: AOL dial-up service provider, email, instant messaging, and web browser

- 1990: IMDb Internet movie database

- 1995: Amazon.com online retailer

- 1995: eBay online auction and shopping

- 1995: Craigslist classified advertisements

- 1996: Hotmail free web-based e-mail

- 1997: Babel Fish automatic translation

- 1998: Google Search

- 1998: Yahoo! Clubs (now Yahoo! Groups)

- 1998: PayPal Internet payment system

- 1999: Napster peer-to-peer file sharing

- 2001: BitTorrent peer-to-peer file sharing

- 2001: Wikipedia, the free encyclopedia

- 2003: LinkedIn business networking

- 2003: Myspace social networking site

- 2003: Skype Internet voice calls

- 2003: iTunes Store

- 2003: 4Chan Anonymous image-based bulletin board

- 2003: The Pirate Bay, torrent file host

- 2004: Facebook social networking site

- 2004: Podcast media file series

- 2004: Flickr image hosting

- 2005: YouTube video sharing

- 2005: Reddit link voting

- 2005: Google Earth virtual globe

- 2006: Twitter microblogging

- 2007: WikiLeaks anonymous news and information leaks

- 2007: Google Street View

- 2007: Kindle, e-reader and virtual bookshop

- 2008: Amazon Elastic Compute Cloud (EC2)

- 2008: Dropbox cloud-based file hosting

- 2008: Encyclopedia of Life, a collaborative encyclopedia intended to document all living species

- 2008: Spotify, a DRM-based music streaming service

- 2009: Bing search engine

- 2009: Google Docs, Web-based word processor, spreadsheet, presentation, form, and data storage service

- 2009: Kickstarter, a threshold pledge system

- 2009: Bitcoin, a digital currency

- 2010: Instagram, photo sharing and social networking

- 2011: Google+, social networking

- 2011: Snapchat, photo sharing

- 2012: Coursera, massive open online courses


Precursors

The development of the

transistor was fundamental to a new generation of electronic devices that later affected almost every aspect of the human experience.

[9][10][11] The first transistor, a

point-contact transistor, was invented by

William Shockley,

Walter Houser Brattain and

John Bardeen at

Bell Labs in 1947.

[10] The

MOSFET (metal-oxide-silicon field-effect transistor), also known as the MOS transistor, was later invented by

Mohamed Atalla and

Dawon Kahng at Bell Labs in 1959.

[12][13][14] The MOSFET is the building block or "workhorse" of the

information revolution and the

information age,

[15][16][17] and the most widely manufactured device in history.

[18][19] MOS integrated circuits and

power MOSFETs drive the

computers and

communications infrastructure that enable the Internet.

[20][21][22] Along with computers, other essential elements of the Internet that are built from MOSFETs include

mobile devices,

transceivers,

base station modules,

routers,

RF power amplifiers,

[23] microprocessors,

memory chips, and

telecommunication circuits.

[24]

Early computers had a central processing unit and remote terminals. As the technology evolved, new systems were devised to allow communication over longer distances (for terminals) or with higher speed (for interconnection of local devices) that were necessary for the

mainframe computer model. These technologies made it possible to exchange data (such as files) between remote computers. However, the point-to-point communication model was limited, as it did not allow for direct communication between any two arbitrary systems; a physical link was necessary. The technology was also considered unsafe for strategic and military use because there were no alternative paths for the communication in case of an enemy attack.

Development of wide area networking

With limited exceptions, the earliest computers were connected directly to terminals used by individual users, typically in the same building or site.

Wide area networks (WANs) emerged during the 1950s and became established during the 1960s.

Inspiration

A network of such centers, connected to one another by wide-band communication lines [...] the functions of present-day libraries together with anticipated advances in information storage and retrieval and symbiotic functions suggested earlier in this paper

In August 1962, Licklider and Welden Clark published the paper "On-Line Man-Computer Communication"

[26] which was one of the first descriptions of a networked future.

For each of these three terminals, I had three different sets of user commands. So if I was talking online with someone at S.D.C. and I wanted to talk to someone I knew at Berkeley or M.I.T. about this, I had to get up from the S.D.C. terminal, go over and log into the other terminal and get in touch with them....

I said, oh man, it's obvious what to do: If you have these three terminals, there ought to be one terminal that goes anywhere you want to go where you have interactive computing. That idea is the ARPAnet.

[28]

Although he left the IPTO in 1964, five years before the ARPANET went live, it was his vision of universal networking that provided the impetus for one of his successors,

Robert Taylor, to initiate the ARPANET development. Licklider later returned to lead the IPTO in 1973 for two years.

[29]

Development of packet switching

The issue of connecting separate physical networks to form one logical network was the first of many problems. Early networks used

message switched systems that required rigid routing structures prone to

single points of failure. In the 1960s,

Paul Baran of the

RAND Corporation produced a study of survivable networks for the U.S. military in the event of nuclear war.

[30] Information transmitted across Baran's network would be divided into what he called "message blocks".

[31] Independently, Donald Davies (National Physical Laboratory, UK) proposed, and was the first to put into practice, a local area network based on what he called packet switching, the term that would ultimately be adopted.

Larry Roberts applied Davies' concepts of packet switching for the ARPANET wide area network,

[32][33] and sought input from Paul Baran and

Leonard Kleinrock. Kleinrock subsequently developed the mathematical theory behind the performance of this technology building on his earlier work on

queueing theory.

[34]

Packet switching is a rapid

store and forward networking design that divides messages up into arbitrary packets, with routing decisions made per-packet. It provides better bandwidth utilization and response times than the traditional circuit-switching technology used for telephony, particularly on resource-limited interconnection links.

[35]
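The idea of dividing a message into independently routed packets can be illustrated with a minimal sketch. It assumes a toy packet format (a sequence number plus a payload) and simulates per-packet routing by shuffling the delivery order; the function names `packetize` and `reassemble` are hypothetical and do not correspond to any historical implementation.

```python
import random


def packetize(message: bytes, payload_size: int) -> list[tuple[int, bytes]]:
    """Split a message into (sequence_number, payload) packets."""
    return [
        (seq, message[offset:offset + payload_size])
        for seq, offset in enumerate(range(0, len(message), payload_size))
    ]


def reassemble(packets: list[tuple[int, bytes]]) -> bytes:
    """Rebuild the message by ordering packets by sequence number."""
    return b"".join(payload for _, payload in sorted(packets))


message = b"Packets may take different routes."
packets = packetize(message, payload_size=8)

# Assumption: independent routing decisions are modelled simply as
# packets arriving in an arbitrary order at the destination.
random.shuffle(packets)

restored = reassemble(packets)
print(restored == message)
```

Because each packet carries its own sequence number, the destination can restore the original message regardless of the order in which the packets arrive.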

Networks that led to the Internet

NPL network

By 1969 Donald Davies had begun building the Mark I packet-switched network to meet the needs of the multidisciplinary laboratory and prove the technology under operational conditions.

[41][42][43] In 1976, 12 computers and 75 terminal devices were attached,

[44] and more were added until the network was replaced in 1986. NPL, followed by ARPANET, were the first two networks in the world to use packet switching,

[45][46] and were interconnected in the early 1970s.

ARPANET

"We set up a telephone connection between us and the guys at SRI ...", Kleinrock ... said in an interview: "We typed the L and we asked on the phone,

- "Do you see the L?"

- "Yes, we see the L," came the response.

- We typed the O, and we asked, "Do you see the O."

- "Yes, we see the O."

- Then we typed the G, and the system crashed ...

Yet a revolution had begun" ....

[48]

35 Years of the Internet, 1969–2004. Stamp of Azerbaijan, 2004.

ARPANET became the technical core of what would become the Internet, and a primary tool in developing the technologies used. The early ARPANET used the

Network Control Program (NCP, sometimes Network Control Protocol) rather than

TCP/IP. On January 1, 1983, known as

flag day, NCP on the ARPANET was replaced by the more flexible and powerful family of TCP/IP protocols, marking the start of the modern Internet.

[51]

International collaborations on ARPANET were sparse. For various political reasons, European developers were concerned with developing the

X.25 networks. Notable exceptions were the

Norwegian Seismic Array (NORSAR) in 1972, followed in 1973 by Sweden with satellite links to the

Tanum Earth Station and

Peter Kirstein's research group in the UK, initially at the Institute of Computer Science, London University and later at

University College London.

[52]

Merit Network

The

Merit Network[53] was formed in 1966 as the Michigan Educational Research Information Triad to explore computer networking between three of Michigan's public universities as a means to help the state's educational and economic development.

[54] With initial support from the

State of Michigan and the

National Science Foundation (NSF), the packet-switched network was first demonstrated in December 1971 when an interactive host to host connection was made between the

IBM mainframe computer systems at the

University of Michigan in

Ann Arbor and

Wayne State University in

Detroit.

[55] In October 1972 connections to the

CDC mainframe at

Michigan State University in

East Lansing completed the triad. Over the next several years in addition to host to host interactive connections the network was enhanced to support terminal to host connections, host to host batch connections (remote job submission, remote printing, batch file transfer), interactive file transfer, gateways to the

Tymnet and

Telenet public data networks,

X.25 host attachments, gateways to X.25 data networks,

Ethernet attached hosts, and eventually

TCP/IP, and additional

public universities in Michigan joined the network.

[55][56] All of this set the stage for Merit's role in the

NSFNET project starting in the mid-1980s.

CYCLADES

The

CYCLADES packet switching network was a French research network designed and directed by

Louis Pouzin. First demonstrated in 1973, it was developed to explore alternatives to the early ARPANET design and to support network research generally. It was the first network to make the hosts responsible for reliable delivery of data, rather than the network itself, using

unreliable datagrams and associated end-to-end protocol mechanisms. Concepts of this network influenced later ARPANET architecture.

[57][58]
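Making the hosts responsible for reliable delivery over unreliable datagrams can be sketched as a simple stop-and-wait exchange. This is an illustrative model only, not the CYCLADES protocol: the lossy network function `unreliable_send`, the loss rate, the seed, and the retry limit are all assumptions chosen for the example.

```python
import random


def unreliable_send(datagram, loss_rate, rng):
    """Hypothetical network: deliver the datagram, or return None if it is lost."""
    return None if rng.random() < loss_rate else datagram


def reliable_transfer(chunks, loss_rate=0.3, seed=1, max_tries=50):
    """Stop-and-wait: the sending host retransmits until it sees an acknowledgement."""
    rng = random.Random(seed)
    received = []
    transmissions = 0
    for seq, chunk in enumerate(chunks):
        for _ in range(max_tries):
            transmissions += 1
            arrived = unreliable_send((seq, chunk), loss_rate, rng)
            if arrived is None:
                continue  # data lost: the sender times out and retransmits
            # The receiving host accepts only the next expected sequence
            # number, so a retransmitted duplicate is not stored twice.
            if arrived[0] == len(received):
                received.append(arrived[1])
            ack = unreliable_send(arrived[0], loss_rate, rng)
            if ack is not None:
                break  # acknowledgement arrived: move on to the next chunk
        else:
            raise RuntimeError("gave up after max_tries attempts")
    return received, transmissions


data = [b"end", b"to", b"end"]
delivered, sent = reliable_transfer(data)
print(delivered == data, sent)
```

The network in this sketch never retries or reorders anything on its own; all recovery logic lives in the two end hosts, which is the design choice the paragraph above describes.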

X.25 and public data networks

1974

ABC interview with

Arthur C. Clarke, in which he describes a future of ubiquitous networked personal computers.

Based on ARPA's research, packet switching network standards were developed by the

International Telecommunication Union (ITU) in the form of X.25 and related standards. While using

packet switching, X.25 is built on the concept of virtual circuits emulating traditional telephone connections. In 1974, X.25 formed the basis for the SERCnet network between British academic and research sites, which later became

JANET. The initial ITU Standard on X.25 was approved in March 1976.

[59]

Unlike ARPANET, X.25 was commonly available for business use.

Telenet offered its Telemail electronic mail service, which was also targeted to enterprise use rather than the general email system of the ARPANET.

The first public dial-in networks used asynchronous

TTY terminal protocols to reach a concentrator operated in the public network. Some networks, such as

CompuServe, used X.25 to multiplex the terminal sessions into their packet-switched backbones, while others, such as

Tymnet, used proprietary protocols. In 1979,

CompuServe became the first service to offer

electronic mail capabilities and technical support to personal computer users. The company broke new ground again in 1980 as the first to offer

real-time chat with its

CB Simulator. Other major dial-in networks were

America Online (AOL) and

Prodigy, which also provided communications, content, and entertainment features. Many

bulletin board system (BBS) networks also provided on-line access, such as

FidoNet which was popular amongst hobbyist computer users, many of them

hackers and

amateur radio operators.


UUCP and Usenet

In 1979, two students at

Duke University,

Tom Truscott and

Jim Ellis, originated the idea of using

Bourne shell scripts to transfer news and messages on a serial line

UUCP connection with nearby

University of North Carolina at Chapel Hill. Following public release of the software in 1980, the mesh of UUCP hosts forwarding on the Usenet news rapidly expanded. UUCPnet, as it would later be named, also created gateways and links between

FidoNet and dial-up BBS hosts. UUCP networks spread quickly due to the lower costs involved, ability to use existing leased lines,

X.25 links or even

ARPANET connections, and the lack of strict use policies compared to later networks like

CSNET and

Bitnet. All connections were local. By 1981 the number of UUCP hosts had grown to 550, nearly doubling to 940 in 1984.

Sublink Network, operating since 1987 and officially founded in Italy in 1989, based its interconnectivity upon UUCP to redistribute mail and news groups messages throughout its Italian nodes (about 100 at the time) owned both by private individuals and small companies.

Sublink Network was possibly one of the first examples of Internet technology advancing through popular diffusion.

[61]

Merging the networks and creating the Internet (1973–95)

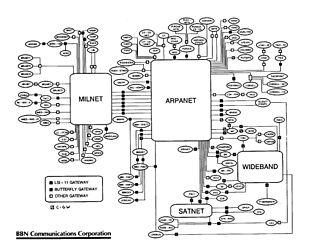

Map of the

TCP/IP test network in February 1982

TCP/IP

With so many different network methods, something was needed to unify them.

Robert E. Kahn of

DARPA and

ARPANET recruited

Vinton Cerf of

Stanford University to work with him on the problem. By 1973, they had worked out a fundamental reformulation, where the differences between network protocols were hidden by using a common

internetwork protocol, and instead of the network being responsible for reliability, as in the ARPANET, the hosts became responsible. Cerf credits

Hubert Zimmermann, Gerard LeLann and

Louis Pouzin (designer of the

CYCLADES network) with important work on this design.

[62]

The specification of the resulting protocol,

RFC 675 – Specification of Internet Transmission Control Program, by Vinton Cerf, Yogen Dalal and Carl Sunshine, Network Working Group, December 1974, contains the first attested use of the term

internet, as a shorthand for

internetworking; later RFCs repeat this use, so the word started out as an

adjective rather than the

noun it is today.

With the role of the network reduced to the bare minimum, it became possible to join almost any networks together, no matter what their characteristics were, thereby solving Kahn's initial problem. DARPA agreed to fund development of prototype software, and after several years of work, the first demonstration of a gateway between the

Packet Radio network in the San Francisco Bay area and the ARPANET was conducted by the

Stanford Research Institute. On November 22, 1977 a three network demonstration was conducted including the ARPANET, the SRI's

Packet Radio Van on the Packet Radio Network and the Atlantic Packet Satellite network.

[63][64]

Stemming from the first specifications of TCP in 1974, TCP/IP emerged in mid-to-late 1978 in nearly its final form. This version, used for the first decades of the Internet, is known as "IPv4" [65] and is described in IETF publication RFC 791 (September 1981).

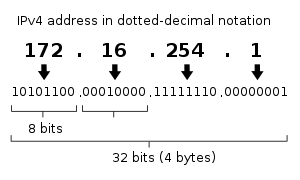

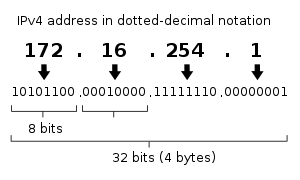

Decomposition of the quad-dotted IPv4 address representation to its

binary value

IPv4 uses 32-bit addresses, which limits the address space to 2^32 addresses, i.e. 4294967296 addresses. [65] The last available IPv4 address was assigned in January 2011. [66] IPv4 is being replaced by its successor, called "IPv6", which uses 128-bit addresses, providing 2^128 addresses, i.e. 340282366920938463463374607431768211456. [67] This is a vastly increased address space. The shift to IPv6 is expected to take many years, decades, or perhaps longer, to complete, since there were four billion machines with IPv4 when the shift began.

[66]
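The decomposition of a quad-dotted IPv4 address into its binary value, and the sizes of the two address spaces, can be checked with a short sketch. The example address `172.16.254.1` and the helper name `dotted_quad_to_int` are assumptions made for illustration; the result is cross-checked against Python's standard `ipaddress` module.

```python
import ipaddress


def dotted_quad_to_int(address: str) -> int:
    """Convert a quad-dotted IPv4 address to its 32-bit integer value."""
    octets = [int(part) for part in address.split(".")]
    if len(octets) != 4 or any(not 0 <= octet <= 255 for octet in octets):
        raise ValueError(f"not a valid IPv4 address: {address!r}")
    value = 0
    for octet in octets:
        # Each octet contributes 8 bits, most significant octet first.
        value = (value << 8) | octet
    return value


example = "172.16.254.1"  # illustrative address, not taken from the text
value = dotted_quad_to_int(example)

print(value)                  # integer form of the address
print(format(value, "032b"))  # the same value as 32 binary digits

# Cross-check against the standard library's own parser.
assert value == int(ipaddress.IPv4Address(example))

# Address space sizes for 32-bit and 128-bit addresses.
print(2 ** 32)
print(2 ** 128)
```

Each of the four decimal fields is one byte of the address, which is why shifting by eight bits per field reproduces the 32-bit value.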

The associated standards for IPv4 were published by 1981 as

RFCs 791, 792 and 793, and adopted for use. DARPA sponsored or encouraged the development of TCP/IP implementations for many operating systems and then scheduled a migration of all hosts on all of its packet networks to TCP/IP. On January 1, 1983, known as

flag day, TCP/IP protocols became the only approved protocol on the ARPANET, replacing the earlier

NCP protocol.

[68]

From ARPANET to NSFNET

After the ARPANET had been up and running for several years, ARPA looked for another agency to hand off the network to; ARPA's primary mission was funding cutting edge research and development, not running a communications utility. Eventually, in July 1975, the network was turned over to the

Defense Communications Agency, also part of the

Department of Defense. In 1983, the

U.S. military portion of the ARPANET was broken off as a separate network, the

MILNET. MILNET subsequently became the unclassified but military-only

NIPRNET, in parallel with the SECRET-level

SIPRNET and

JWICS for TOP SECRET and above. NIPRNET does have controlled security gateways to the public Internet.

The networks based on the ARPANET were government funded and therefore restricted to noncommercial uses such as research; unrelated commercial use was strictly forbidden. This initially restricted connections to military sites and universities. During the 1980s, the connections expanded to more educational institutions, and even to a growing number of companies such as

Digital Equipment Corporation and

Hewlett-Packard, which were participating in research projects or providing services to those who were.

T3 NSFNET Backbone, c. 1992

NASA developed the TCP/IP based NASA Science Network (NSN) in the mid-1980s, connecting space scientists to data and information stored anywhere in the world. In 1989, the

DECnet-based Space Physics Analysis Network (SPAN) and the TCP/IP-based NASA Science Network (NSN) were brought together at NASA Ames Research Center creating the first multiprotocol wide area network called the NASA Science Internet, or NSI. NSI was established to provide a totally integrated communications infrastructure to the NASA scientific community for the advancement of earth, space and life sciences. As a high-speed, multiprotocol, international network, NSI provided connectivity to over 20,000 scientists across all seven continents.

In 1981 NSF supported the development of the

Computer Science Network (CSNET). CSNET connected with ARPANET using TCP/IP, and ran TCP/IP over

X.25, but it also supported departments without sophisticated network connections, using automated dial-up mail exchange.

In 1986, the NSF created

NSFNET, a 56 kbit/s

backbone to support the NSF-sponsored

supercomputing centers. The NSFNET also provided support for the creation of regional research and education networks in the United States, and for the connection of university and college campus networks to the regional networks.

[69] The use of NSFNET and the regional networks was not limited to supercomputer users and the 56 kbit/s network quickly became overloaded. NSFNET was upgraded to 1.5 Mbit/s in 1988 under a cooperative agreement with the

Merit Network in partnership with

IBM,

MCI, and the

State of Michigan. The existence of NSFNET and the creation of

Federal Internet Exchanges (FIXes) allowed the ARPANET to be decommissioned in 1990. NSFNET was expanded and upgraded to 45 Mbit/s in 1991, and was decommissioned in 1995 when it was replaced by backbones operated by several commercial

Internet Service Providers.

Transition towards the Internet

The term "internet" was adopted in the first RFC published on the TCP protocol (

RFC 675:

[70] Internet Transmission Control Program, December 1974) as an abbreviation of the term

internetworking and the two terms were used interchangeably. In general, an internet was any network using TCP/IP. Around the time ARPANET was interlinked with

NSFNET in the late 1980s, the term came to be used as the name of the network, the Internet, meaning the large, global TCP/IP network.

[71]

As interest in networking grew and new applications for it were developed, the Internet's technologies spread throughout the rest of the world. The network-agnostic approach in TCP/IP meant that it was easy to use any existing network infrastructure, such as the

IPSS X.25 network, to carry Internet traffic. In 1982, one year earlier than ARPANET, University College London replaced its transatlantic satellite links with TCP/IP over IPSS.

[72][73]

Many sites unable to link directly to the Internet created simple gateways for the transfer of electronic mail, the most important application of the time. Sites with only intermittent connections used

UUCP or

FidoNet and relied on the gateways between these networks and the Internet. Some gateway services went beyond simple mail peering, such as allowing access to

File Transfer Protocol (FTP) sites via UUCP or mail.

[74]

TCP/IP goes global (1980s)

CERN, the European Internet, the link to the Pacific and beyond

Between 1984 and 1988

CERN began installation and operation of

TCP/IP to interconnect its major internal computer systems, workstations, PCs and an accelerator control system. CERN continued to operate a limited self-developed system (CERNET) internally and several incompatible (typically proprietary) network protocols externally. There was considerable resistance in Europe towards more widespread use of

TCP/IP, and the CERN TCP/IP intranets remained isolated from the Internet until 1989.

In 1988, Daniel Karrenberg, from

Centrum Wiskunde & Informatica (CWI) in

Amsterdam, visited Ben Segal,

CERN's TCP/IP Coordinator, looking for advice about the transition of the European side of the UUCP Usenet network (much of which ran over X.25 links) over to TCP/IP. In 1987, Ben Segal had met with

Len Bosack from the then still small company

Cisco about purchasing some TCP/IP routers for CERN, and was able to give Karrenberg advice and forward him on to Cisco for the appropriate hardware. This expanded the European portion of the Internet across the existing UUCP networks, and in 1989 CERN opened its first external TCP/IP connections.

[76] This coincided with the creation of Réseaux IP Européens (

RIPE), initially a group of IP network administrators who met regularly to carry out coordination work together. Later, in 1992, RIPE was formally registered as a

cooperative in Amsterdam.

At the same time as the rise of internetworking in Europe, ad hoc networking to ARPA and between Australian universities formed, based on various technologies such as X.25 and

UUCPNet. These were limited in their connection to the global networks, due to the cost of making individual international UUCP dial-up or X.25 connections. In 1989, Australian universities joined the push towards using IP protocols to unify their networking infrastructures.

AARNet was formed in 1989 by the

Australian Vice-Chancellors' Committee and provided a dedicated IP based network for Australia.

The Internet began to penetrate Asia in the 1980s. In May 1982

South Korea became the second country to successfully set up a TCP/IP IPv4 network.

[77][78] Japan, which had built the UUCP-based network

JUNET in 1984, connected to

NSFNET in 1989. It hosted the annual meeting of the

Internet Society, INET'92, in

Kobe.

Singapore developed TECHNET in 1990, and

Thailand gained a global Internet connection between Chulalongkorn University and UUNET in 1992.

[79]

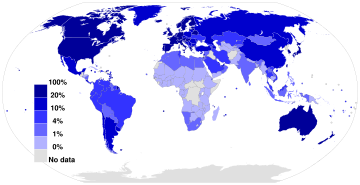

The early global "digital divide" emerges

While developed countries with technological infrastructures were joining the Internet, developing countries began to experience a

digital divide separating them from the Internet. On an essentially continental basis, they are building organizations for Internet resource administration and sharing operational experience, as more and more transmission facilities go into place.

Africa

At the beginning of the 1990s, African countries relied upon X.25

IPSS and 2400 baud modem UUCP links for international and internetwork computer communications.

In August 1995, InfoMail Uganda, Ltd., a privately held firm in Kampala now known as InfoCom, and NSN Network Services of Avon, Colorado, sold in 1997 and now known as Clear Channel Satellite, established Africa's first native TCP/IP high-speed satellite Internet services. The data connection was originally carried by a C-Band RSCC Russian satellite which connected InfoMail's Kampala offices directly to NSN's MAE-West point of presence using a private network from NSN's leased ground station in New Jersey. InfoCom's first satellite connection was just 64 kbit/s, serving a Sun host computer and twelve US Robotics dial-up modems.

Africa is building an Internet infrastructure.

AFRINIC, headquartered in

Mauritius, manages IP address allocation for the continent. As in the other Internet regions, there is an operational forum, the Internet Community of Operational Networking Specialists.

[83]

There are many programs to provide high-performance transmission plant, and the western and southern coasts have undersea optical cable. High-speed cables join North Africa and the Horn of Africa to intercontinental cable systems. Undersea cable development is slower for East Africa; the original joint effort between

New Partnership for Africa's Development (NEPAD) and the East Africa Submarine System (Eassy) has broken off and may become two efforts.

[84]

Asia and Oceania

The

Asia Pacific Network Information Centre (APNIC), headquartered in Australia, manages IP address allocation for the continent. APNIC sponsors an operational forum, the Asia-Pacific Regional Internet Conference on Operational Technologies (APRICOT).

[85]

South Korea's first Internet system, the System Development Network (SDN), began operation on 15 May 1982. SDN was connected to the rest of the world in August 1983 using UUCP (Unix-to-Unix-Copy); connected to CSNET in December 1984; and formally connected to the U.S. Internet in 1990.

[86]

In 1991, the People's Republic of China saw its first

TCP/IP college network,

Tsinghua University's TUNET. The PRC went on to make its first global Internet connection in 1994, between the Beijing Electro-Spectrometer Collaboration and

Stanford University's Linear Accelerator Center. However, China went on to implement its own digital divide by implementing a country-wide

content filter.

[87]

Latin America

Rise of the global Internet (late 1980s/early 1990s onward)

Initially, as with its predecessor networks, the system that would evolve into the Internet was primarily for government and government body use.

However, interest in commercial use of the Internet quickly became a commonly debated topic. Although commercial use was forbidden, the exact definition of commercial use was unclear and subjective.

UUCPNet and the X.25 IPSS had no such restrictions, which would eventually see the official barring of UUCPNet use of

ARPANET and

NSFNET connections. (Some UUCP links still remained connected to these networks, however, as administrators turned a blind eye to their operation.)


In 1992, the U.S. Congress passed the Scientific and Advanced-Technology Act,

42 U.S.C. § 1862(g), which allowed NSF to support access by the research and education communities to computer networks which were not used exclusively for research and education purposes, thus permitting NSFNET to interconnect with commercial networks.

[90][91] This caused controversy within the research and education community, who were concerned commercial use of the network might lead to an Internet that was less responsive to their needs, and within the community of commercial network providers, who felt that government subsidies were giving an unfair advantage to some organizations.

[92]

By 1990, ARPANET's goals had been fulfilled, new networking technologies had exceeded its original scope, and the project came to a close. New network service providers including

PSINet,

Alternet, CERFNet, ANS CO+RE, and many others were offering network access to commercial customers.

NSFNET was no longer the de facto backbone and exchange point of the Internet. The

Commercial Internet eXchange (CIX),

Metropolitan Area Exchanges (MAEs), and later

Network Access Points (NAPs) were becoming the primary interconnections between many networks. The final restrictions on carrying commercial traffic ended on April 30, 1995, when the National Science Foundation ended its sponsorship of the NSFNET Backbone Service and the service was shut down.

[93][94] NSF provided initial support for the NAPs and interim support to help the regional research and education networks transition to commercial ISPs. NSF also sponsored the

very high speed Backbone Network Service (vBNS) which continued to provide support for the supercomputing centers and research and education in the United States.

[95]

World Wide Web and introduction of browsers

Precursors to the web browser emerged in the form of

hyperlinked applications during the mid and late 1980s (the bare concept of hyperlinking had by then existed for some decades). Following these,

Tim Berners-Lee is credited with inventing the World Wide Web in 1989 and developing in 1990 both the first

web server, and the first web browser, called

WorldWideWeb (no spaces) and later renamed Nexus.

[100] Many others were soon developed, with

Marc Andreessen's 1993

Mosaic (later

Netscape),

[101] being particularly easy to use and install, and often credited with sparking the internet boom of the 1990s.

[102] Today, the major web browsers are

Firefox,

Internet Explorer,

Google Chrome,

Opera and

Safari.

[103]

A boost in web users was triggered in September 1993 by

NCSA Mosaic, a graphical browser which eventually ran on several popular office and home computers.

[104] This was the first web browser aiming to bring multimedia content to non-technical users, and therefore included images and text on the same page, unlike previous browser designs;

[105] its founder,

Marc Andreessen, also established the company that released

Netscape Navigator in 1994. This led to one of the early

browser wars, a competition for dominance with

Microsoft Windows'

Internet Explorer, which Netscape lost. Commercial use restrictions were lifted in 1995. The online service America Online (AOL) offered its users a connection to the Internet via its own internal browser.

Use in wider society 1990s to early 2000s (Web 1.0)

During the first decade or so of the public internet, the immense changes it would eventually enable in the 2000s were still nascent. In terms of providing context for this period,

mobile cellular devices ("smartphones" and other cellular devices), which today provide near-universal access, were used mainly for business and were not yet routine household items owned by parents and children worldwide.

Social media in the modern sense had yet to come into existence, laptops were bulky, and most households did not have computers. Data rates were slow and most people lacked the means to record or digitize video; media storage was transitioning slowly from

analog tape to

digital optical discs (

DVD and to an extent still,

floppy disc to

CD). Enabling technologies used from the early 2000s such as

PHP, modern

JavaScript and

Java, technologies such as

AJAX,

HTML 4 (and its emphasis on

CSS), and various

software frameworks, which enabled and sped up web development, largely awaited invention and eventual widespread adoption.

The Internet was widely used for

mailing lists,

emails,

e-commerce and early popular

online shopping (

Amazon and

eBay for example),

online forums and

bulletin boards, and personal websites and

blogs, and use was growing rapidly, but by more modern standards the systems used were static and lacked widespread social engagement. It took a number of events in the early 2000s for the Internet to change from a communications technology into a key part of global society's infrastructure.

The changes that would propel the Internet into its place as a social system took place during a relatively short period of no more than five years, starting from around 2004. They included:

- The call to "Web 2.0" in 2004 (first suggested in 1999),

- Accelerating adoption and commoditization among households of, and familiarity with, the necessary hardware (such as computers).

- Accelerating storage technology and data access speeds – hard drives emerged, took over from far smaller, slower floppy discs, and grew from megabytes to gigabytes (and by around 2010, terabytes), RAM from hundreds of kilobytes to gigabytes as typical amounts on a system, and Ethernet, the enabling technology for TCP/IP, moved from common speeds of kilobits to tens of megabits per second, to gigabits per second.

- High speed Internet and wider coverage of data connections, at lower prices, allowing larger traffic rates, more reliable and simpler traffic, and traffic from more locations,

- The gradually accelerating perception of the ability of computers to create new means and approaches to communication, the emergence of social media and websites such as Twitter and Facebook to their later prominence, and global collaborations such as Wikipedia (which existed before but gained prominence as a result),

and shortly after (approximately 2007–2008 onward):

- The mobile revolution, which provided access to the Internet to much of human society of all ages, in their daily lives, and allowed them to share, discuss, and continually update, inquire, and respond.

- Non-volatile RAM rapidly grew in size and reliability, and decreased in price, becoming a commodity capable of enabling high levels of computing activity on these small handheld devices as well as solid-state drives (SSD).

- An emphasis on power efficient processor and device design, rather than purely high processing power; one of the beneficiaries of this was ARM, a British company which had focused since the 1980s on powerful but low cost simple microprocessors. ARM architecture rapidly gained dominance in the market for mobile and embedded devices.

With the call to Web 2.0, the period up to around 2004–2005 was retrospectively named and described by some as Web 1.0.

[citation needed]

Web 2.0

- "The Web we know now, which loads into a browser window in essentially static screenfuls, is only an embryo of the Web to come. The first glimmerings of Web 2.0 are beginning to appear, and we are just starting to see how that embryo might develop. The Web will be understood not as screenfuls of text and graphics but as a transport mechanism, the ether through which interactivity happens. It will [...] appear on your computer screen, [...] on your TV set [...] your car dashboard [...] your cell phone [...] hand-held game machines [...] maybe even your microwave oven."

The term resurfaced during 2002–2004,

[114][115][116][117] and gained prominence in late 2004 following presentations by

Tim O'Reilly and Dale Dougherty at the first

Web 2.0 Conference. In their opening remarks,

John Battelle and Tim O'Reilly outlined their definition of the "Web as Platform", where software applications are built upon the Web as opposed to upon the desktop. The unique aspect of this migration, they argued, is that "customers are building your business for you".

[118] They argued that the activities of users generating content (in the form of ideas, text, videos, or pictures) could be "harnessed" to create val

hitecture Board (IAB).[141]The Internet Research Task Force (IRTF) and the Internet Research Steering Group (IRSG), peer activities to the IETF and IESG under the general supervision of the IAB, focus on longer term research issues.[138][142]

Request for Comments (RFCs) are the main documentation for the work of the IAB, IESG, IETF, and IRTF.

RFC 1, "Host Software", was written by Steve Crocker at

UCLA in April 1969, well before the IETF was created. Originally they were technical memos documenting aspects of ARPANET development and were edited by

Jon Postel, the first

RFC Editor.

[138][143]

RFCs cover a wide range of information from proposed standards, draft standards, full standards, best practices, experimental protocols, history, and other informational topics.

[144] RFCs can be written by individuals or informal groups of individuals, but many are the product of a more formal Working Group. Drafts are submitted to the IESG either by individuals or by the Working Group Chair. An RFC Editor, ap

The Internet Society

The

Internet Society (ISOC) is an international, nonprofit organization founded during 1992 "to assure the open development, evolution and use of the Internet for the benefit of all people throughout the world". With offices near Washington, DC, USA, and in Geneva, Switzerland, ISOC has a membership base comprising more than 80 organizational and more than 50,000 individual members. Members also form "chapters" based on either common geographical location or special interests. There are currently more than 90 chapters around the world.

[145]

Globalization and Internet governance in the 21st century

Since the 1990s, the

Internet's governance and organization have been of global importance to governments, commerce, civil society, and individuals. The organizations which held control of certain technical aspects of the Internet were the successors of the old ARPANET oversight and the current decision-makers in the day-to-day technical aspects of the network. While recognized as the administrators of certain aspects of the Internet, their roles and their decision-making authority are limited and subject to increasing international scrutiny and increasing objections. These objections led ICANN first to end its relationship with the

University of Southern California in 2000,

[147] and then, in September 2009, to gain autonomy from the US government through the ending of its longstanding agreements, although some contractual obligations with the U.S. Department of Commerce continued.

[148][149][150] Finally, on October 1, 2016 ICANN ended its contract with the United States Department of Commerce National Telecommunications and Information Administration (

NTIA), allowing oversight to pass to the global Internet community.

[151]

The IETF, with financial and organizational support from the Internet Society, continues to serve as the Internet's ad-hoc standards body and issues

Request for Comments.

In November 2005, the

World Summit on the Information Society, held in

Tunis, called for an

Internet Governance Forum (IGF) to be convened by the

United Nations Secretary-General. The IGF opened an ongoing, non-binding conversation among stakeholders representing governments, the private sector, civil society, and the technical and academic communities about the future of Internet governance. The first IGF meeting was held in October/November 2006, with follow-up meetings annually thereafter.

[152] Since WSIS, the term "Internet governance" has been broadened beyond narrow technical concerns to include a wider range of Internet-related policy issues.

[153][154]

Politicization of the Internet

Due to its prominence and immediacy as an effective means of mass communication, the Internet has also become more

politicized as it has grown. This has led, in turn, to discourses and activities that would once have taken place in other ways migrating to the Internet:

- The spreading of ideas and opinions;

- Recruitment of followers, and "coming together" of members of the public, for ideas, products, and causes;

- Providing and widely distributing and sharing information that might be deemed sensitive or relates to whistleblowing (and efforts by specific countries to prevent this by censorship);

- Criminal activity and terrorism (and resulting law enforcement use, together with its facilitation by mass surveillance);

- Politically-motivated fake news.

Net neutrality

On April 23, 2014, the

Federal Communications Commission (FCC) was reported to be considering a new rule that would permit

Internet service providers to offer content providers a faster track to send content, thus reversing their earlier

net neutrality position.

[155][156][157] A possible solution to net neutrality concerns may be

municipal broadband, according to

Professor Susan Crawford, a legal and technology expert at

Harvard Law School.

[158] On May 15, 2014, the FCC decided to consider two options regarding Internet services: first, permit fast and slow broadband lanes, thereby compromising net neutrality; and second, reclassify broadband as a

telecommunication service, thereby preserving net neutrality.

[159][160] On November 10, 2014,

President Obama recommended the FCC reclassify broadband Internet service as a telecommunications service in order to preserve

net neutrality.

[161][162][163] On January 16, 2015,

Republicans presented legislation, in the form of a

U.S. Congress HR discussion draft bill, that makes concessions to net neutrality but prohibits the FCC from accomplishing the goal or enacting any further regulation affecting

Internet service providers (ISPs).

[164][165] On January 31, 2015,

AP News reported that the FCC will present the notion of applying ("with some caveats")

Title II (common carrier) of the

Communications Act of 1934 to the internet in a vote expected on February 26, 2015.

[166][167][168][169][170] Adoption of this notion would reclassify internet service from one of information to one of

telecommunications[171] and, according to

Tom Wheeler, chairman of the FCC, ensure

net neutrality.

[172][173] The FCC is expected to enforce net neutrality in its vote, according to

The New York Times.

[174][175]

On March 12, 2015, the FCC released the specific details of the net neutrality rules.

[180][181][182] On April 13, 2015, the FCC published the final rule on its new "

Net Neutrality" regulations.

[183][184]

On December 14, 2017, the FCC voted 3–2 to repeal the net neutrality rules it had released on March 12, 2015.

[185]

Use and culture

Email and Usenet

The ARPANET computer network made a large contribution to the evolution of electronic mail. Experimental inter-system mail was transferred on the ARPANET shortly after its creation.

[187] In 1971

Ray Tomlinson created what was to become the standard Internet electronic mail addressing format, using the

@ sign to separate mailbox names from host names.

[188]
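As a small illustration of that addressing format, the sketch below splits an address at the @ sign into its mailbox and host parts. The function name and the example address are hypothetical, and the code ignores the many special cases that real mail software has to handle.

```python
from typing import Tuple


def split_address(address: str) -> Tuple[str, str]:
    """Split 'mailbox@host' at the last @ sign into (mailbox, host)."""
    mailbox, separator, host = address.rpartition("@")
    # Reject strings with no @ sign, or with an empty part on either side.
    if not separator or not mailbox or not host:
        raise ValueError(f"not a mailbox@host address: {address!r}")
    return mailbox, host


# Hypothetical example address, used only for illustration.
mailbox, host = split_address("user@example.org")
print(mailbox, host)
```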

A number of protocols were developed to deliver messages among groups of time-sharing computers over alternative transmission systems, such as

UUCP and

IBM's

VNET email system. Email could be passed this way between a number of networks, including

ARPANET,

BITNET and

NSFNET, as well as to hosts connected directly to other sites via UUCP. See the

history of SMTP protocol.

In addition, UUCP allowed the publication of text files that could be read by many others. The News software developed by Steve Daniel and

Tom Truscott in 1979 was used to distribute news and bulletin board-like messages. This quickly grew into discussion groups, known as

newsgroups, on a wide range of topics. On ARPANET and NSFNET similar discussion groups would form via

mailing lists, discussing both technical issues and more culturally focused topics (such as science fiction, discussed on the sflovers mailing list).

During the early years of the Internet, email and similar mechanisms were also fundamental to allow people to access resources that were not available due to the absence of online connectivity. UUCP was often used to distribute files using the 'alt.binary' groups. Also,

FTP e-mail gateways allowed people who lived outside the US and Europe to download files using ftp commands written inside email messages. The file was encoded, broken into pieces and sent by email; the receiver had to reassemble and decode it later, and it was the only way for people living overseas to download items such as the earlier Linux versions using the slow dial-up connections available at the time. After the popularization of the Web and the HTTP protocol such tools were slowly abandoned.
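The encode, split, reassemble and decode cycle described above can be sketched roughly as follows. This is an illustration only: Base64 from the Python standard library stands in for whatever text encoding a given gateway actually used, and the function names and piece size are assumptions.

```python
import base64
from typing import List


def encode_in_pieces(data: bytes, piece_size: int = 60) -> List[str]:
    """Encode binary data as ASCII text and cut it into mail-sized pieces."""
    text = base64.b64encode(data).decode("ascii")
    return [text[i:i + piece_size] for i in range(0, len(text), piece_size)]


def reassemble(pieces: List[str]) -> bytes:
    """Join the pieces in their original order and decode back to bytes."""
    return base64.b64decode("".join(pieces))


original = bytes(range(256)) * 4          # stand-in for a downloaded file
pieces = encode_in_pieces(original)       # each piece would travel in one message
restored = reassemble(pieces)
print(len(pieces), restored == original)
```

The receiver has to put the pieces back in the right order before decoding, which is why the process was slow and error-prone compared with a direct download.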

From Gopher to the WWW

As the Internet grew through the 1980s and early 1990s, many people realized the increasing need to be able to find and organize files and information. Projects such as

Archie,

Gopher,

WAIS, and the FTP Archive list attempted to create ways to organize distributed data. In the early 1990s, Gopher, invented by

Mark P. McCahill, offered a viable alternative to the

World Wide Web. However, in 1993 the World Wide Web saw many advances in indexing and ease of access through search engines, which often neglected Gopher and Gopherspace. As popularity increased through ease of use, investment incentives also grew until, in the middle of 1994, the WWW's popularity gained the upper hand. It then became clear that Gopher and the other projects were doomed to fall short.

[189]

In 1989, while working at

CERN,

Tim Berners-Lee invented a network-based implementation of the hypertext concept. By releasing his invention to public use, he encouraged widespread use.

[192] For his work in developing the World Wide Web, Berners-Lee received the

Millennium Technology Prize in 2004.

[193] One early popular web browser, modeled after

HyperCard, was

ViolaWWW.

Mosaic was superseded in 1994 by Andreessen's

Netscape Navigator, which replaced Mosaic as the world's most popular browser. While it held this title for some time, eventually competition from

Internet Explorer and a variety of other browsers almost completely displaced it. Another important event held on January 11, 1994, was

The Superhighway Summit at

UCLA's Royce Hall. This was the "first public conference bringing together all of the major industry, government and academic leaders in the field [and] also began the national dialogue about the

Information Superhighway and its implications."

[197]

Search engines

Even before the World Wide Web, there were search engines that attempted to organize the Internet. The first of these was the

Archie search engine from McGill University in 1990, followed in 1991 by

WAIS and Gopher. All three of those systems predated the invention of the World Wide Web but all continued to index the Web and the rest of the Internet for several years after the Web appeared. There are still Gopher servers as of 2006, although there are a great many more web servers.

As the Web grew,

search engines and

Web directories were created to track pages on the Web and allow people to find things. The first full-text Web search engine was

WebCrawler in 1994. Before WebCrawler, only Web page titles were searched. Another early search engine,

Lycos, was created in 1993 as a university project, and was the first to achieve commercial success. During the late 1990s, both Web directories and Web search engines were popular—

Yahoo! (founded 1994) and

Altavista (founded 1995) were the respective industry leaders. By August 2001, the directory model had begun to give way to search engines, tracking the rise of

Google (founded 1998), which had developed new approaches to

relevancy ranking. Directory features, while still commonly available, became afterthoughts to search engines.

Database size, which had been a significant marketing feature through the early 2000s, was similarly displaced by emphasis on relevancy ranking, the methods by which search engines attempt to sort the best results first. Relevancy ranking first became a major issue circa 1996, when it became apparent that it was impractical to review full lists of results. Consequently,

algorithms for relevancy ranking have continuously improved. Google's

PageRank method for ordering the results has received the most press, but all major search engines continually refine their ranking methodologies with a view toward improving the ordering of results. As of 2006, search engine rankings are more important than ever, so much so that an industry has developed ("

search engine optimizers", or "SEO") to help web developers improve their search ranking, and an entire body of

case law has developed around matters that affect search engine rankings, such as use of

trademarks in

metatags. The sale of search rankings by some search engines has also created controversy among librarians and consumer advocates.

[202]
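The idea of relevancy ranking can be illustrated with a simplified, textbook-style form of link-based ranking in the spirit of PageRank. This is only a sketch: the function name, the damping factor of 0.85, the iteration count and the three-page example graph are all assumptions made for illustration, and it does not describe how any real search engine orders its results.

```python
from typing import Dict, List


def pagerank(links: Dict[str, List[str]],
             damping: float = 0.85,
             iterations: int = 50) -> Dict[str, float]:
    """Estimate link-based scores by repeatedly passing rank along links."""
    pages = sorted(set(links) | {t for targets in links.values() for t in targets})
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page keeps a small baseline share of the total rank.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page divides its damped rank equally among its links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                share = damping * rank[page] / n
                for target in pages:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical three-page web: "a" and "b" both link to "c", and "c" links to "a".
scores = pagerank({"a": ["c"], "b": ["c"], "c": ["a"]})
for page in sorted(scores, key=scores.get, reverse=True):
    print(page, round(scores[page], 3))
```

In this toy graph the page with the most incoming links ends up ranked first, which is the intuition behind sorting "the best results first".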

File sharing

Resource or file sharing has been an important activity on computer networks from well before the Internet was established and was supported in a variety of ways including

bulletin board systems (1978),

Usenet (1980),

Kermit (1981), and many others. The

File Transfer Protocol (FTP) for use on the Internet was standardized in 1985 and is still in use today.

[205] A variety of tools were developed to aid the use of FTP by helping users discover files they might want to transfer, including the

Wide Area Information Server (WAIS) in 1991,

Gopher in 1991,

Archie in 1990,

Veronica in 1992,

Jughead in 1993,

Internet Relay Chat (IRC) in 1988, and eventually the

World Wide Web (WWW) in 1991 with

Web directories and

Web search engines.

In 1999,

Napster became the first

peer-to-peer file sharing system.

[206] Napster used a central server for indexing and peer discovery, but the storage and transfer of files was decentralized. A variety of peer-to-peer file sharing programs and services with different levels of decentralization and

anonymity followed, including:

Gnutella,

eDonkey2000, and

Freenet in 2000,

FastTrack,

Kazaa,

Limewire, and

BitTorrent in 2001, and

Poisoned in 2003.

[207]

All of these tools are general purpose and can be used to share a wide variety of content, but sharing of music files, software, and later movies and videos are major uses.

[208] And while some of this sharing is legal, large portions are not. Lawsuits and other legal actions caused Napster in 2001, eDonkey2000 in 2005,

Kazaa in 2006, and Limewire in 2010 to shut down or refocus their efforts.

[209][210] The Pirate Bay, founded in Sweden in 2003, continues despite a

trial and appeal in 2009 and 2010 that resulted in jail terms and large fines for several of its founders.

[211] File sharing remains contentious and controversial with charges of theft of

intellectual property on the one hand and charges of

censorship on the other.

[212][213]

Dot-com bubble

Suddenly the low price of reaching millions worldwide, and the possibility of selling to or hearing from those people at the same moment when they were reached, promised to overturn established business dogma in advertising,

mail-order sales,

customer relationship management, and many more areas. The web was a new

killer app—it could bring together unrelated buyers and sellers in seamless and low-cost ways. Entrepreneurs around the world developed new business models, and ran to their nearest

venture capitalist. While some of the new entrepreneurs had experience in business and economics, the majority were simply people with ideas, and did not manage the capital influx prudently. Additionally, many dot-com business plans were predicated on the assumption that by using the Internet, they would bypass the distribution channels of existing businesses and therefore not have to compete with them; when the established businesses with strong existing brands developed their own Internet presence, these hopes were shattered, and the newcomers were left attempting to break into markets dominated by larger, more established businesses. Many did not have the ability to do so.

The dot-com bubble burst in March 2000, with the technology-heavy

NASDAQ Composite index peaking at 5,048.62 on March 10

[214] (5,132.52 intraday), more than double its value just a year before. By 2001, the bubble's deflation was running full speed. A majority of the dot-coms had ceased trading, after having burnt through their

venture capital and IPO capital, often without ever making a

profit. Despite this, the Internet continues to grow, driven by commerce, ever greater amounts of online information and knowledge, and social networking.

Mobile phones and the Internet

The first mobile phone with Internet connectivity was the

Nokia 9000 Communicator, launched in Finland in 1996. The viability of Internet services access on mobile phones was limited until prices came down from that model, and network providers started to develop systems and services conveniently accessible on phones.

NTT DoCoMo in Japan launched the first mobile Internet service,

i-mode, in 1999, and this is considered the birth of mobile phone Internet services. In 2001, the mobile phone email system by Research in Motion (now

BlackBerry Limited) for their

BlackBerry product was launched in America. To make efficient use of the small screen and

tiny keypad and one-handed operation typical of mobile phones, a specific document and networking model was created for mobile devices, the

Wireless Application Protocol (WAP). Most mobile device Internet services operate using WAP. The growth of mobile phone services was initially a primarily Asian phenomenon with Japan, South Korea and Taiwan all soon finding the majority of their Internet users accessing resources by phone rather than by PC.

[citation needed] Developing countries followed, with India, South Africa, Kenya, the Philippines, and Pakistan all reporting that the majority of their domestic users accessed the Internet from a mobile phone rather than a PC. The European and North American use of the Internet was influenced by a large installed base of personal computers, and the growth of mobile phone Internet access was more gradual, but had reached national penetration levels of 20–30% in most Western countries.

[215] The cross-over occurred in 2008, when more Internet access devices were mobile phones than personal computers. In many parts of the developing world, the ratio is as much as 10 mobile phone users to one PC user.

[216]

Web technologies

Historiography

There are nearly insurmountable problems in supplying a

historiography of the Internet's development. The process of digitization represents a twofold challenge both for historiography in general and, in particular, for historical communication research.

[217] A sense of the difficulty in documenting early developments that led to the internet can be gathered from the quote:

The Arpanet period is somewhat well documented because the corporation in charge –

BBN – left a physical record. Moving into the

NSFNET era, it became an extraordinarily decentralized process. The record exists in people's basements, in closets. ... So much of what happened was done verbally and on the basis of individual trust.